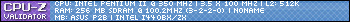

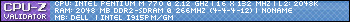

I agree. For me, the change from single-core to multi-core happened as I moved away from Windows XP and into Windows Vista. I've never really used a computer running Windows XP that had more than a single-core processor. Vista ran great with a Core 2 Duo @2.4GHz and 4GB of DDR2-800. My current media server is using a quad-core Xeon E5450 modded to work on an LGA775 motherboard and 4GB of DDR2-800... and it works perfectly fine. My mother's PC is running a Core 2 Quad Q9650 (essentially the same as the E5450), 8GB of DDR3-1333, and a 240GB boot SSD paired with a 1TB storage HDD, and it runs Windows 10 just fine. It started out with a Core 2 Duo and only 4GB of memory and it did actually work, though boot times were 3x longer without the SSD.

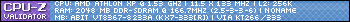

My netbook has an Intel Atom N450 single-core 1.6GHz processor with hyper-threading (giving me only 2 threads) and it's running Windows 10 Home... but it's horribly slow (obviously). It's so slow that even the SSD I use as the drive isn't really able to help beyond the initial boot. To use that netbook for anything, I have to start it up and then wait about 10 minutes for all the background shit to finish grinding the system to a crawl. It also has only 2GB of DDR3 memory, so that probably contributes to the slowness. Windows 7 Starter wasn't much better than Windows 10. In fact, W10 at least boots faster. I assume this netbook would be perfectly fine if I were to put Windows XP on it and put the old hard drive back in.

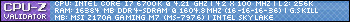

What does all that mean? I would say that Windows 10, to be usable, requires AT LEAST a dual-core CPU and at least 4GB of RAM. Considering that lets you go all the way back to 2007/8, that's pretty impressive. It's also why I like poking around LGA775-era tech: it's still relatively cheap and you can build a working system with a modern OS... it just won't play 2019 AAA game titles, as you limit yourself to PCI-Express 1.1 speeds.