F2bnp wrote:I don't really agree with that sentiment. It's definitely the more power efficient architecture over Hawaii and Fiji, but you are overestimating how fast the end products were. GTX 980 sure was a little faster, but it also cost $550. GTX 970 was on par with R9 290 and R9 290x, albeit with lower power consumption. As far as value goes, you could regularly find sales for the R9 290 for ~$250 and I got mine for 280E (damn you VAT 😵 ). The GTX 960 was particularly expensive for what it was IMO and the R9 280/280x and especially R9 380 were the cards with the most performance/$.

See, that's where we won't get along. I'm an engineer, I look at the technical merits of an architecture. To me, the pricetag is nothing more than an arbitrary number that the IHV sticks on its products. It's completely irrelevant to the technology.

AMD puts lower pricetags on their technology... yea, whatever. nVidia *could* do the same, since their technology is more advanced and more efficient, and therefore cheaper to produce. They're more expensive because that's what you can do when you have the technological edge.

There's no reason for them to compete on price. GTX960 and GTX970 have been by far the best-selling GPUs of the past year, and are the most popular by a margin on e.g. the Steam HW survey: http://store.steampowered.com/hwsurvey/videocard/

I think you can interpret that as the market saying nVidia's prices are just fine.

F2bnp wrote:Are those effects missing on AMD hardware? Never heard of that to be honest!

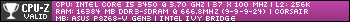

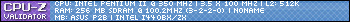

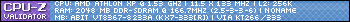

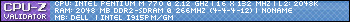

Yup, you only get them on nVidia hardware at this point (and in theory on Intel, but I don't think their iGPUs would perform well enough anyway):

http://steamcommunity.com/games/391220/announ … 690772757808963

Adds NVIDIA VXAO Ambient Occlusion technology. This is the world’s most advanced real-time AO solution, specifically developed for NVIDIA Maxwell hardware. (Steam Only)

Here is more background information on VXAO: https://developer.nvidia.com/vxao-voxel-ambient-occlusion

F2bnp wrote:No, this is not bias. The fact of the matter is that Nvidia gave false specs to the public and journalists and only answered truthfully when they were confronted about it.

No, they weren't 'false', they were 'not sufficiently detailed' at best. The bias is in people claiming it's false and deceptive and whatnot.

nVidia probably thought it wasn't an important detail, as you wouldn't notice anyway. And they were right. The GTX970 cards were reviewed, and nobody noticed anything. It wasn't until some people started poking around with debugging tools that they saw the 3.5 GB-figure pop up somewhere, and didn't know how to interpret it.

F2bnp wrote:Again, I disagree. The TressFX issue you speak of was in Tomb Raider (2013) and was fixed almost immediately.

By nVidia, not by AMD.

Perhaps AMD should also 'fix' the 'issues' with GameWorks.

F2bnp wrote:By comparison, every Gaming Evolved title I can think of runs fine on Nvidia hardware.

That's what happens when you write decent drivers, apply per-game fixes and optimizations where necessary, and design GPUs that perform well overall, rather than just in select scenarios.

F2bnp wrote: It gives a small boost to AMD hardware, but it doesn't destroy performance on Nvidia. That has been the case since the early 00's anyway with "The Way It's Meant To Be Played" and other marketing crap like that.

I can name plenty of "The Way It's Meant To Be Played"-titles that work fine on AMD hardware... Problem is, it took AMD 1 or 2 more generations of GPUs to fix their problems before those games started performing.

F2bnp wrote:Not saying I like Gaming Evolved, again I'm not fond of PC Gaming getting fragmented like that, but I can't deny that it is far more acceptable than what Nvidia is doing.

It's exactly the same, it just doesn't hurt nVidia as much, because nVidia have their act together, as said above.

F2bnp wrote:And you had to bring up Tessellation, which has multiple examples across quite a few games in which Tessellation was hampering even Nvidia's performance for no perceptible IQ gain, just in order to show AMD in a negative light. I was shocked when I saw it on Crysis 2 and I was shocked that they did it again with Witcher 3.

Funny you mention Crysis 2. That's exactly one of those games where AMD's then-current GPU architecture was hurt, but the generation after that actually outperformed even nVidia's offerings at the time.

Why? Because AMD's early tessellators sucked a great deal. They then copy-pasted 4 of them into the next GPU... It didn't scale as well as nVidia's in extreme situations, but it was good enough for the moderate tessellation that Crysis 2 performed. Had they gone all-out on tessellation in Crysis 2, AMD's hardware would still have been in trouble.

F2bnp wrote:Can't say I really care about PhysX, seems like a gimmick.

That's just one of those things that AMD is in the way of. PhysX is pretty awesome technology, but because it is nVidia-only, it won't see widespread use in games. Only if AMD drops out of the market, or a vendor-neutral version of GPU physics arrives, can games make full use of it without having to worry about it not working on some machines.

Which means PhysX is limited to only bolt-on gimmick effects. Still pretty cool, but not reaching the full potential of GPU physics as a concept.

F2bnp wrote:Now had it been open sourced and available to everyone, I think we'd have seen some really cool shit with it. That still saddens me somewhat 🙁.

Why would it have to be open source? AMD can implement CUDA at any time (see http://www.techradar.com/news/computing-compo … hnology--612041), and run PhysX on their GPUs. CUDA is open, the compiler is even open sourced. All AMD would have to do is write a back-end for their GPUs.

F2bnp wrote:Okay, can we stop with the insults?

I'm not insulting you. You obviously just copied that rhetoric from elsewhere. I've seen it surface numerous times. It's all part of the AMD propaganda machine.

F2bnp wrote:I wasn't talking about VRAM sizes although I don't see why I shouldn't. GTX 680/770 and 780/780Ti all launching with 2GB and 3GB versions respectively isn't doing Nvidia any favors. They should have had more VRAM, since AMD products did.

Not to mention that GTX 960 2GB VRAM in January 2015 😵 .

So? You get what you pay for. If you buy a 2 GB card, you should expect it to perform as a 2 GB card. Which it does.

Trying to spin that as "planned obsolescence" is quite sad, desperate even.

F2bnp wrote:It's not just the VRAM though. If you take a look at reviews from TechPowerUp (choosing this site since it has very nice "Relative Performance" charts for multiple resolutions and videocards) and compare 2012/2013 to nowadays, you'll see 280X catching up on GTX 780 even!

Yea, I've seen that theory. One AMD fanboy even posted it directly on my own blog. It's total conjecture. See, the problem with the TechPowerUp relative performance charts is that you cannot compare them from one review to the next.

Namely, TechPowerUp doesn't use the exact same set of games, the same drivers, OS, CPU etc when doing these charts.

Obviously, changing any of these parameters, especially the set of games, will result in different "Relative performance" figures.

"280X catching up to GTX780" could simply be a result of TechPowerUp having dropped some games from the set that were unfavourable to the 280X, and added some new games that are more favourable.

Or they could have moved to a different CPU, which suits the driver for the 280X better than the GTX780.

Just some examples of why you can't compare these numbers.
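
To make that concrete, here is a rough sketch with completely made-up FPS numbers. The card names, game names and figures are all hypothetical, and I'm assuming the chart is a geometric mean of per-game FPS ratios, which may not be how TechPowerUp actually computes theirs. The per-game results for both cards stay exactly the same; only the set of games changes.

```python
from statistics import geometric_mean

# Hypothetical average FPS per game; none of these figures are measurements.
FPS = {
    "card_a": {"game1": 60.0, "game2": 80.0, "game3": 50.0, "game4": 45.0},
    "card_b": {"game1": 48.0, "game2": 60.0, "game3": 50.0, "game4": 48.0},
}

def relative_performance(card, baseline, games):
    """Geometric mean of per-game FPS ratios (card vs. baseline), in percent.

    Assumption: the chart aggregates per-game ratios with a geometric mean.
    """
    ratios = [FPS[card][g] / FPS[baseline][g] for g in games]
    return 100.0 * geometric_mean(ratios)

old_suite = ["game1", "game2", "game3"]
new_suite = ["game2", "game3", "game4"]  # game1 dropped, game4 added

old_score = relative_performance("card_b", "card_a", old_suite)
new_score = relative_performance("card_b", "card_a", new_suite)
print(f"old suite: {old_score:.1f}%  new suite: {new_score:.1f}%")
```

In this toy data card_b "catches up" on card_a between the two suites, even though neither card got any faster in any individual game. The only thing that changed is which games are being averaged.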

Anyway, the problem you're now facing is that you've mentioned a number of common points often brought forward by AMD fanboys. So I'm not sure if that's just coincidence, or if you're part of that group of AMD people that spread this nonsense across the web, like the guy that posted pretty much the exact same things on my blog some weeks ago.