Grzyb wrote on 2023-02-06, 03:27:

Jo22 wrote on 2023-02-05, 20:50:

If you, the user, merely have a lousy 1MB in your AT class computer, then you're better off running CP/M, anyway.

I would say that an AT with 1 MB RAM is already asking for a protected mode OS.

It depends, I think. 640KB was not enough for most DOS programs on their own, even single-tasked.

I'm not eager to see how a multi-tasking scenario would look (struggle) here.

Expanded Memory came to help early on to circumvent the limited address space. To help DOS.

One of my AST RAMPage boards from the 80s is fully populated; it has 2MB.

That's something meaningful, something to work with.

And that was a period-correct config, I didn't add anything.

A real OS, using Protected Mode, would no longer need to rely on this kind of bank-switching at all.

The hardware could directly address the memory, if the physical bus allows addressing it (16-Bit ISA).

That's essentially what DOS extenders did.

They were miniature OSes, bridging the Real-Mode DOS/BIOS and Protected-Mode.

Himem.sys was like a gripper arm - operated by DOS applications, it reached out beyond the 1MB boundary.

There it grabbed a small block of memory and brought it back. The specification for this was named XMS.

This worked by Himem.sys either going into Protected Mode and back or by using LOADALL.

The original PC/AT BIOS had the ability to access Extended Memory, as well.

There was a function via int 15h, which did the required Protected-Mode/Real-Mode switch.

However, it was cumbersome to use and slow by comparison.
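To illustrate why the BIOS route felt cumbersome, here is a rough sketch of the table a caller had to prepare. It assumes the function in question is the AT BIOS block move (int 15h, AH=87h), which the post does not name explicitly. The helper names `descriptor` and `block_move_table` are made up for this example, and Python only builds the bytes here; the real call passed the table in ES:SI and the word count in CX.

```python
import struct

def descriptor(base, limit, access=0x93):
    """Pack one 8-byte 286-style descriptor:
    16-bit limit, 24-bit base, access byte, reserved word."""
    return struct.pack("<HHBBH", limit, base & 0xFFFF, (base >> 16) & 0xFF, access, 0)

def block_move_table(src, dst, words):
    """Build the 48-byte table for a block move of `words` 16-bit words.

    Six slots: dummy, table itself, source, destination, BIOS code, BIOS stack.
    Only source and destination are filled in by the caller (assumed layout).
    """
    limit = words * 2 - 1          # limit must cover the bytes being copied
    empty = bytes(8)               # slots the BIOS fills in itself
    return b"".join([empty, empty,
                     descriptor(src, limit),
                     descriptor(dst, limit),
                     empty, empty])

# Hypothetical example: copy 4 KB from the start of Extended Memory (1 MB)
# down to a buffer at linear address 0x020000 in conventional memory.
table = block_move_table(src=0x100000, dst=0x020000, words=0x0800)
print(len(table), table[16:24].hex())
```

All of that setup, plus two mode switches, for every single copy. That is the overhead XMS drivers tried to hide from the application.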

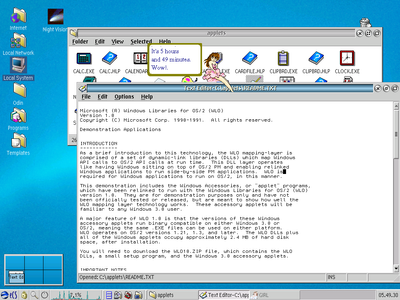

PS: By the way - Windows 1.x and 2.x applications were constantly running out of memory, way back in the 80s.

Of course, a home user could cram Windows itself into the memory of a 640KB PC/XT or 1MB AT/286 and it would run.

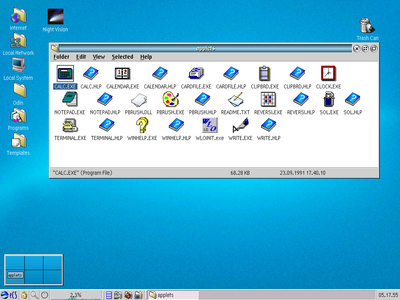

But what about the programs? I'm not talking about calc.exe, notepad.exe and clock.exe.

Let's think of Aldus Page Maker or PC Paintbrush. Without the help of Expanded Memory, most projects couldn't be loaded.

That's why they supported it. 1MB, or rather 640KB base + 384KB extended, may not have been enough in practice.

The 640KB are already being used up by DOS, Windows and the application, leaving only the remaining memory for the application's working data.

If Shadow Memory was enabled, at least 64KB would be lost to BIOS, then 24 to 32KB to the VGA BIOS.

Leaving about 300KB. This may or may not have been sufficient for the DTP work.
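The arithmetic behind that "about 300KB" can be written down as a quick sketch. It only uses the figures from this post (1MB total, 640KB base, 64KB for the shadowed system BIOS, 24 to 32KB for the shadowed VGA BIOS); the variable names are mine.

```python
# Rough memory budget for a 1 MB AT, using the figures from the post.
TOTAL_KB = 1024
CONVENTIONAL_KB = 640
beyond_640_kb = TOTAL_KB - CONVENTIONAL_KB   # the 384 KB above the base memory

SYSTEM_BIOS_SHADOW_KB = 64                   # "at least 64KB" lost to the BIOS

def remaining_after_shadow(vga_bios_kb):
    """KB left beyond 640 KB once both BIOS shadows are subtracted."""
    return beyond_640_kb - SYSTEM_BIOS_SHADOW_KB - vga_bios_kb

low = remaining_after_shadow(32)    # larger VGA BIOS shadow
high = remaining_after_shadow(24)   # smaller VGA BIOS shadow
print(f"{low} to {high} KB left for working data")
```

So the range is a bit under 300KB, before any network driver or font data takes its share.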

But if a 20 page magazine with graphics was being written/designed in Page Maker, then maybe more memory was needed.

Also, what about networking - large projects may not fit on floppies, but could be shared via a simple network.

Even if it merely was a null-modem connection and some shareware program for a remote drive.

How much does such a network utility need? Another 50 to 150KB of memory?

The font files must also be considered. In the Windows 1.x and 2.x days, the fonts on screen did depend on the printer drivers.

So if you forgot to install a printer during Windows installation, many important fonts were not installed/loaded.

I'm assuming that's what many retro people don't know these days when they try out 80s Windows for the first time.

They likely select printer: none during setup instead of selecting HP Deskjet connected to a file output.

Edit: Here's a link to an older posting of mine about the requirements for Aldus Page Maker on Windows 2.0 (it shipped with a Windows 2 runtime).

It's not a prime example of Windows applications, maybe, but it is at least evidence that I'm not making things up.

Re: Feeding low end RAM to the scrappers?

I'm not angry (not at you, not in general), nor do I want to be right all the time.

In fact, I'm a person who has made many mistakes in life, far from being perfect.

I'm merely speaking in defense here, to prove that there sometimes was a real need for a few MB of RAM in the DOS/Windows days, before OS/2 and strong multi-tasking were a thing.

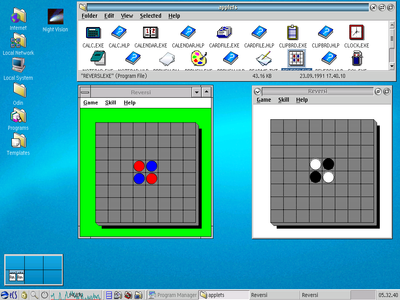

The multi-tasking operation in 16-Bit Windows was more like multi-application operation, really.

With lightweight programs, the cooperative multitasking model worked fine. Even quicker, often.

Say, running the clock, writing a letter in WinWord, using the calculator and having the terminal in the background receive a document from your agency via acoustic coupler.

That really worked.

But once you ran Gupta's SQLWindows simultaneously, maybe not so much anymore.

One program in the foreground might have been using so many resources that the other programs froze.
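A minimal sketch of that effect, using Python generators as stand-ins for Windows applications. This is a toy model, not how 16-Bit Windows was implemented: each `yield` plays the role of an application returning to its message loop, and the task names are just labels.

```python
from collections import deque

def polite_task(name, steps, log):
    """Does one small unit of work, then hands control back."""
    for i in range(steps):
        log.append(f"{name}:{i}")
        yield

def hog_task(name, busy_steps, log):
    """Does all of its work in one go before yielding a single time."""
    for i in range(busy_steps):
        log.append(f"{name}:{i}")
    yield

def run(tasks):
    """Round-robin scheduler: a task keeps the CPU until it yields by itself."""
    queue = deque(tasks)
    while queue:
        task = queue.popleft()
        try:
            next(task)
        except StopIteration:
            continue            # task finished, drop it
        queue.append(task)      # otherwise it gets another turn later

log = []
run([polite_task("clock", 2, log),
     hog_task("sql", 3, log),
     polite_task("term", 2, log)])
print(log)
```

In the log, the terminal's first step only shows up after the hog has finished all three of its steps. Nothing can interrupt it, which is the weak spot of the cooperative model.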

OS/2 was trying to fix this, among other things.

That's why I found Willow so fascinating, as a concept.

One and the same program could run on a simple PC with 16-Bit Windows, and in a professional environment on OS/2.

If only IBM hadn't messed this up. *sigh*

Grzyb wrote on 2023-02-06, 03:27:

Jo22 wrote on 2023-02-05, 20:50:

If you, the user, merely have a lousy 1MB in your AT class computer, then you're better off running CP/M, anyway.

[..] A real mode OS is limited to 640 KB, or 704 KB with the HMA.

Let's assume the OS occupies 100 KB.

So, we're left with 540 or 604 KB free for the userland.

I see. We're still sitting in the sand pit with our CGA PC-clone. C64 and ZX Spectrum are watching us.

Grzyb wrote on 2023-02-06, 03:27:

Jo22 wrote on 2023-02-05, 20:50:

If you, the user, merely have a lousy 1MB in your AT class computer, then you're better off running CP/M, anyway.

[..] Of course, a protected mode OS needs more memory - let's assume 200 or 300 KB.

The result would be: 724 or 824 KB of free memory. PROFIT!

Cool. We now can run many C64 programs at once. And one or two PC applications, alternatively.

If not, uncle MemMaker and aunt Qemm will come help us. 🙄

Just kidding. 😀

But you still think in a DOS box (pun intended), it seems to me. 🙁

As if a lousy megabyte was somehow great if it only was wisely used, I mean.

That wasn't the case. Ataris and Amigas with 512KB were already running out of memory;

the planned 256KB Atari ST model of the mid-80s couldn't even leave the factory.

Of course, they didn't have the 640KB barrier, either.

So there was no need to keep memory count low from the manufacturer's side.

What comes to mind in this context:

Between 1987 and 1990, 1MB, 2MB and 4MB versions of the Mega ST were being sold.

Older releases were being updated (retrofitted) by their users, as needed.

https://www.old-computers.com/museum/computer.asp?st=1&c=165

http://www.computinghistory.org.uk/det/48869/Atari-Mega-4/

Of course, the Mega series was not aimed at the home users, at the children playing Monkey Island.

But these models existed and were not exotic. Their higher amount of memory was useful, if not required by an application.

There also were multitasking versions of TOS that either required the extra memory or could use it well. MagiC, MiNT, Geneva etc.

Source: http://www.mbernstein.de/atari/system/overview.htm

When it comes to PCs, what I really mean to say (please let me explain my point of view):

Once you start to virtualize and buffer physical devices in an OS, you need some buffers in RAM.

- There are multiple background processes running (scheduler, kernel, spooler etc)

- The OS has to save/restore the video memory (4KB up to 256KB)

- It must run copies of the command line interpreter, one for each session/terminal

- There are environment variables to take care of

- Network stack needs space; the ethernet card may need a larger i/o space in memory, as well.

- ...

And that's merely for a DOS-style "OS". A weird construct which uses software interrupts for its API.

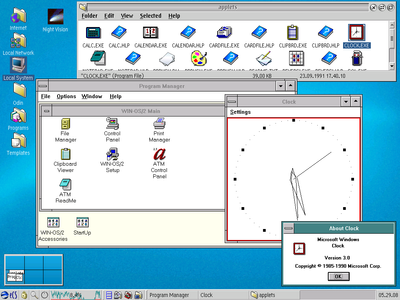

If we're going graphical, we may quickly encounter so-called dynamic link libraries.

Each of those little beings contains functions, subroutines so to speak.

(OS/2 v1.1 had about 39 of them in the DLL directory, each holding useful code.

All in all, there were ~170 files that OS/2 v1.1 consisted of. Versus 3 in PC-DOS.

COMMAND.COM, IBMBIO.COM, IBMDOS.COM.)

Each time an application or part of the OS needs such a useful function, a DLL must be loaded.

Not forever, maybe, but it must be loaded into a free spot in memory so the function(s) can be executed.

That memory must be allocated first, experts would say.

If there's plenty of memory available, the memory manager or loader program can do that quickly.

On our C64-PC with 1MB total, this might not be so easy.

Everything is so crowded, because the user was both penny wise and pound dumb.

DLLs that were already previously loaded, but not currently required, must be unloaded (or swapped-out).

Which causes fragmentation in memory. Then, after clean up, the new DLL can be loaded.
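Here is a toy model of that situation. The 400KB arena, the block names and their sizes are invented for illustration, and real loaders were more involved; it just shows how 80KB can be free in total while a 60KB DLL still doesn't fit until the clean-up has happened.

```python
ARENA_KB = 400   # hypothetical pool of memory available for code

def free_holes(used, arena_kb=ARENA_KB):
    """Return (start, length) of every gap between the used blocks."""
    holes, cursor = [], 0
    for _name, start, length in sorted(used, key=lambda b: b[1]):
        if start > cursor:
            holes.append((cursor, start - cursor))
        cursor = start + length
    if cursor < arena_kb:
        holes.append((cursor, arena_kb - cursor))
    return holes

def first_fit(holes, size):
    """Start address of the first hole that can take `size` KB, or None."""
    for start, length in holes:
        if length >= size:
            return start
    return None

def compact(used):
    """The clean-up: slide all used blocks down so the free space is one piece."""
    packed, cursor = [], 0
    for name, _start, length in sorted(used, key=lambda b: b[1]):
        packed.append((name, cursor, length))
        cursor += length
    return packed

# Layout after two DLLs were unloaded: two separate 40 KB holes remain.
used = [("A", 0, 100), ("B", 140, 160), ("C", 340, 60)]
holes = free_holes(used)
total_free = sum(length for _start, length in holes)
before = first_fit(holes, 60)                      # no single hole is big enough
after = first_fit(free_holes(compact(used)), 60)   # fits once memory is compacted
print(holes, total_free, before, after)
```

The compaction step is exactly the kind of shuffling that costs time on a crowded 1MB machine.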

That's similar to what Norton Commander does, with its NCSMALL utility, I assume.

Albeit on a much simpler level (NC stays unloaded as long as the application is running, then is loaded again).

Other DOS programs of the day used overlay files (OVL), if I remember correctly.

Now, on a 286 we have a little friend called Memory Management Unit (MMU).

It can help us to manage memory. OS/2 made use of it, unlike DOS at the time.

Instead of using physical addresses, the MMU allowed the OS to use virtual addresses.

By making use of this, application code no longer had to be overwritten or copied from A to B.

Instead, pointers/selectors etc changed, as needed. Ironically, this is a bit akin to good old EMS again, which uses mapping.
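A small sketch of the selector idea, as a toy model only. The `DescriptorTable` class, the selector value 0x08 and the addresses are invented for this example and do not reproduce the real 286 descriptor format. The point is that the application keeps using the same selector:offset pair while the OS changes where the selector points.

```python
class DescriptorTable:
    """Toy stand-in for a descriptor table: selector -> (base, limit)."""

    def __init__(self):
        self.entries = {}

    def set(self, selector, base, limit):
        self.entries[selector] = (base, limit)

    def translate(self, selector, offset):
        """Turn the application's selector:offset into a physical address."""
        base, limit = self.entries[selector]
        if offset > limit:
            raise MemoryError("offset beyond segment limit")
        return base + offset

table = DescriptorTable()
app_pointer = (0x08, 0x0010)            # what the application holds on to

table.set(0x08, base=0x120000, limit=0xFFFF)
first = table.translate(*app_pointer)   # segment currently lives above 1 MB

table.set(0x08, base=0x040000, limit=0xFFFF)
second = table.translate(*app_pointer)  # OS pointed the selector elsewhere

print(hex(first), hex(second))
```

The application's pointer never changed; only the table entry did. That is the part that resembles EMS mapping.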

However, by using virtual memory we were still not freed from having to rely on a storage medium for the actual code/data.

Which, of course, is/was the fixed-disk. Which is/was far slower than RAM. Especially if it was MFM/RLL. With the wrong interleave-factor.

Anyone who ever used an HDD-based EMS simulator (LIMulator) can likely relate to this experience.

We must manage all the code/data that gets swapped to disk and loaded back into memory.

The MMU in the 386 had an extra feature for this - the Paging Unit.

It allowed swapping pretty much on the fly, with way less housekeeping work for the OS.

All in all, this ensured that the operating system and the applications could keep working even if the physical RAM was getting low.

However, it didn't mean that having little RAM and a big swap file was fine. The virtual memory merely kept the system from collapsing.

It was a support, not a replacement. Batman had Robin as a side-kick, too.
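To put a number on "support, not a replacement", here is a toy pager. It is only a sketch: the `ToyPager` class is invented, the least-recently-used eviction policy is my assumption (the hardware doesn't dictate one), and a dictionary stands in for the swap file. Every fault in it would be a trip to the slow HDD.

```python
from collections import OrderedDict

class ToyPager:
    """Keeps at most `frames` pages in 'RAM'; everything else goes to 'swap'."""

    def __init__(self, frames):
        self.frames = frames
        self.resident = OrderedDict()   # page -> data, least recently used first
        self.swap = {}                  # stand-in for the swap file on disk
        self.faults = 0

    def access(self, page):
        if page in self.resident:
            self.resident.move_to_end(page)     # mark as recently used
            return self.resident[page]
        self.faults += 1                        # page fault: disk access needed
        if len(self.resident) >= self.frames:
            victim, data = self.resident.popitem(last=False)
            self.swap[victim] = data            # swap out the oldest page
        data = self.swap.pop(page, f"page-{page}")
        self.resident[page] = data
        return data

def count_faults(frames, accesses):
    pager = ToyPager(frames)
    for page in accesses:
        pager.access(page)
    return pager.faults

accesses = [0, 1, 0, 2, 1]
tight = count_faults(2, accesses)   # too little RAM for the working set
roomy = count_faults(3, accesses)   # one more frame
print(tight, roomy)
```

With two frames the same access pattern causes more faults than with three. The system keeps running in both cases, but with less RAM it spends more of its time waiting for the disk.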

Well, it worked at least if the HDD kept responding in time. If the time out was too great, the OS could still freeze or completely halt.

That's what is still happening with modern OSes, like Windows XP. And it's good, I think.

A frozen system is better than data corruption caused by an overloaded system.

Grzyb wrote on 2023-02-06, 03:27:

The problem was that OS/2 used much more than double or triple of what DOS used...

No, I think the problem was that home users had no sense of proportion.

They applied the needs of a glorified bootloader, aka DOS, to a sophisticated operating system.

Or in other words, they tried to carry over their C64 experience in the bedroom to a real computer and the environment it operates in.

They think that an OS shouldn't need more resources than Monkey Island or Commander Keen running on a fast PC/XT (-> 286 with 1MB).

To them, a program either fits into memory or it does not. The game runs or it does not. But that's not how things work in real life.

PC compatibles were used for serious tasks, tasks on which things did depend. And these tasks were dynamic, the workload was constantly changing.

Let's just think about it for a moment. A non-home user PC was perhaps doing batch processing in a facility, compiling large code for projects, controlling industrial machinery.

An advanced OS must protect that workflow, must protect itself in this process. So many things may depend on this. It even has a priority-based scheduler, perhaps.

It can't be busy all the time shuffling around code/data in memory in order not to drown, hence the need for RAM. Without RAM, it can't think properly.

Seriously, please visit the links that davidrg has provided us. They give valuable insights.

The view of the ex-Microsoft programmer makes sense, I think (even though I think it's not entirely free of biases).

Especially the part in which he describes why OS/2 was tricky to explain to users.

Edit: Speaking about money. Professionals or business people could set off certain things against tax.

If an important investment, say an expensive RAM upgrade was required (which it was, no lie),

then the professional could make an appropriate tax declaration. So he hasn't lost much money, if at all.

This is another fine, but important detail in home user vs professional.

The small, penny wise people or users constantly think about saving money,

whereas the professional or commercial user has to keep an eye on the greater whole.

In this case, the computers in his business must be running, not crawling.

He simply can't afford that.

Grzyb wrote on 2023-02-06, 03:27:

Jo22 wrote on 2023-02-05, 20:50:

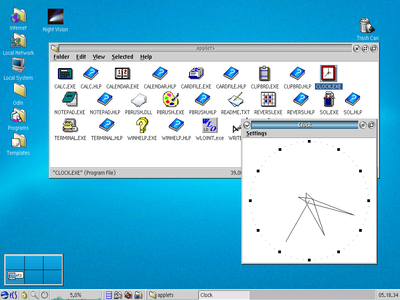

So, you were able to use OS/2 1.x when it was already obsolete.

OS/2 2.0 (1992) was still beyond your reach.

I'm trying to say I had a reasonable basic amount of memory installed at the time, though it wasn't ideal.

It was good enough for Windows 3.1 on a 286 in Standard-Mode with a light workload.

For multi-tasking or OS/2, I would have installed more than that, of course (+ a 386 CPU). Same goes for DTP and programming.

Contiguous memory is important to Windows 3.x, at least. Memory fragmentation slows it down a lot.

Edit: Edited a few times. Still not completely okay, I'm afraid. To err is human.

And to really mess things up needs a computer. 😉