First post, by radiance32

Hi all,

I've veered into C/C++ development for 16-bit 8086 DOS targets,

and I am currently experimenting with graphics.

After reviewing the tools out there and trying many options, I have finally

started my project with OpenWatcom 1.9 on my Windows 10 PC,

using DOSBOX as my execution & testing environment for my compiled executables.

I'm currently writing a 3D wireframe rasterizer, and I am using a

fast line algorithm in C++ that sets pixels using the graph.h graphics library

that comes with Watcom.

This library is somewhat similar to BGI (Borland Graphics Interface) for old Borland language compilers (although it's definitely not compatible).

My target hardware uses a CGA display adapter,

and I can, with the Watcom graph.h library, initialize and set the CGA adapter to 640x200 mono,

and then have my line drawing algorithm set the pixels directly on the adapter using its _setpixel() function.

However, I need double buffering: first, because with single buffering the model is visibly built up on screen line by line over a second or so,

and with double buffering I can just set the bits in a memory array and then copy the array to the CGA adapter to display it.

Also, setting many individual bits in memory is much faster than setting many individual bits in graphics memory.

But I've tried everything I can think of, and I just can't figure out the layout of the memory.

// Initialize the adapter to HIRES CGA:

_setvideomode(_HRESBW);

_setcolor(15); // draw in white

struct videoconfig vc;

_getvideoconfig(&vc);

int width = vc.numxpixels;

int height = vc.numypixels;

// Then, I grab a copy of the framebuffer to get the right size for my videobuffer array:

long videobuf_size = _imagesize( 0, 0, width-1, height-1 ); // This value turns out to be 16006 (CGA HIRES mode requires 16KB, so that makes sense; what the additional 6 are, I don't know)

// Allocate the buffer

char *videobuf = (char*) malloc( videobuf_size );
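
On the 16006 figure: a 1-bit-per-pixel 640x200 image packs 8 pixels into each byte, so the pixel data alone comes to 80 bytes per row times 200 rows, or 16000 bytes. The remaining 6 bytes would be consistent with a small header stored in front of the pixel data, but that is an assumption to check against the Open Watcom graphics library reference, not something confirmed here. A minimal sketch of the arithmetic in portable C++, with hypothetical helper names:

```cpp
// Hypothetical helper: bytes needed for one row of a packed image,
// rounded up to a whole byte.
long packed_row_bytes(int width, int bits_per_pixel) {
    return ((long)width * bits_per_pixel + 7) / 8;
}

// Hypothetical helper: bytes of pixel data only, excluding any header
// the graphics library may place in front of it.
long packed_image_bytes(int width, int height, int bits_per_pixel) {
    return packed_row_bytes(width, bits_per_pixel) * height;
}
```

For 640x200 at 1 bit per pixel, `packed_image_bytes(640, 200, 1)` accounts for all but 6 of the bytes reported by `_imagesize`.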

And my main renderloop basically consists of these buffer related operations:

// Clear the buffer

for(int i=0; i<videobuf_size; i++) {

    videobuf[i] = 0;

}

// I then cast my char* buffer to a bool* buffer, so I can address the individual bits of its monochrome bitmap

bool *bitbuf = (bool*) videobuf;

// Loop through my triangles, transform them and then draw lines

...

// The individual locations of the pixels for the line algorithm are set as such:

// set pixel at x and y on:

bitbuf[( y * (width-1) ) + x] = true;

// Once it's finished, I copy the buffer from memory to graphics memory to display it:

_putimage( 0, 0, videobuf, _GPSET );
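
One thing worth checking in the code above: in C++ a `bool` occupies at least one whole byte, so indexing a `bool*` steps through bytes, not bits. In a 1-bit-per-pixel image the row stride is also `width / 8` bytes, not `width - 1`. Below is a minimal sketch of bit-level addressing. It assumes rows are stored top to bottom and the leftmost pixel of each byte is the most significant bit. Whether `_putimage` expects exactly this layout, and how many header bytes precede the pixel data, would need to be verified. `set_pixel_1bpp` and its `data_offset` parameter are hypothetical names.

```cpp
#include <cstddef>

// Set one pixel in a packed 1-bit-per-pixel buffer.
// Assumptions (not confirmed by the original post):
//  - rows are stored linearly, top to bottom, each padded to a whole byte
//  - the leftmost pixel of each byte is the most significant bit
//  - data_offset is the number of header bytes before the pixel data
void set_pixel_1bpp(unsigned char *buf, std::size_t data_offset,
                    int width, int x, int y) {
    std::size_t row_bytes = (std::size_t)(width + 7) / 8;   // 80 for width 640
    std::size_t index = data_offset
                      + (std::size_t)y * row_bytes           // start of row y
                      + (std::size_t)(x >> 3);               // byte within the row
    buf[index] |= (unsigned char)(0x80 >> (x & 7));          // bit within the byte
}
```

If the 6 bytes beyond 16000 reported by `_imagesize` turn out to be a header, the call would look like `set_pixel_1bpp((unsigned char*)videobuf, 6, width, x, y)`.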

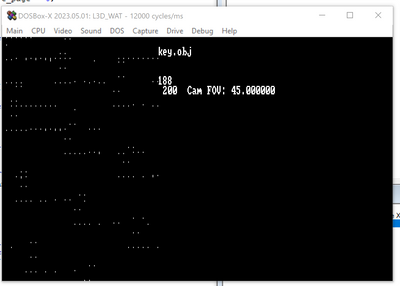

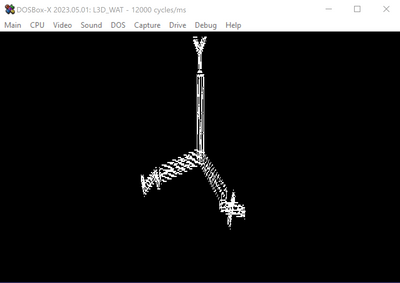

IMO this should work, but, as you can see in the attached image, the whole thing is a mess of vertical lines.

I'm thinking that CGA memory doesn't work like I think it does and it would be awesome if someone here

could give me a hand to fix this as I've tried many things and nothing works...
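
For reference, the CGA's own video memory in 640x200 mode is not one linear bitmap. Even scanlines sit in the first 8 KB bank starting at segment B800h, and odd scanlines sit in a second bank 2000h bytes later. Each scanline is 80 bytes, with the leftmost pixel in the most significant bit. That describes the hardware only; it does not by itself say how the graph.h image buffer is laid out. A minimal sketch of the offset calculation, with hypothetical function names:

```cpp
// Byte offset of pixel (x, y) within CGA video memory in 640x200 mode,
// relative to the start of the frame buffer (segment B800h).
unsigned cga_hires_offset(int x, int y) {
    return (unsigned)((y & 1) * 0x2000   // odd scanlines live in the second bank
                    + (y >> 1) * 80      // 80 bytes per scanline within a bank
                    + (x >> 3));         // 8 pixels per byte
}

// Bit mask selecting pixel x within its byte (bit 7 is the leftmost pixel).
unsigned char cga_hires_mask(int x) {
    return (unsigned char)(0x80 >> (x & 7));
}
```

Writing directly to video memory would use these two values together, OR-ing the mask into the byte at the computed offset.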

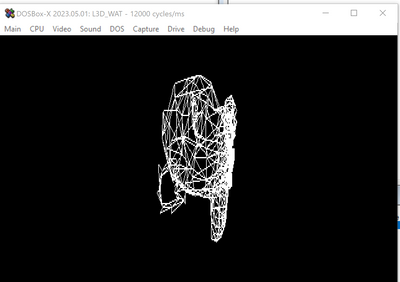

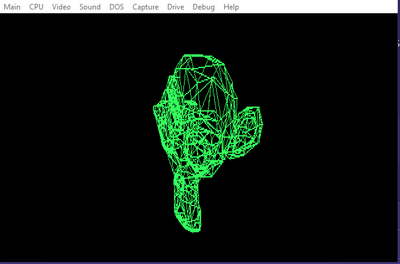

I've attached an image of what it should look like (the blender suzanne monkey being rasterized),

and what it looks like when using my video buffer technique, a garbled mess...

Thanks!

Terrence

Check out my new HP 100/200LX Palmtop YouTube Channel! https://www.youtube.com/channel/UCCVChzZ62a-c4MdJWyRwdCQ