douglar wrote on 2021-05-14, 17:29:

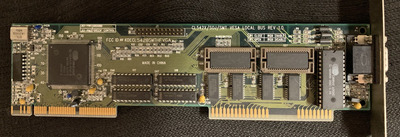

Looks like your Trident VGA card is an older model with a separate crystal for each frequency, while newer Trident cards use a single crystal and a PLL to generate the clocks for different refresh rates.

The Cirrus GD-542x series also uses a PLL, fed by a 14.318 MHz input, to generate its pixel and memory clocks. (This input was chosen because a 14.318 MHz clock is available "for free" on the ISA bus.)

It's a "fractional N" PLL: if I understand correctly, it multiplies the input clock by a configurable integer M and then divides it by a configurable integer N to produce the output frequency, i.e. f_out = 14.318 MHz × M / N. As a result, the card is never going to hit the exact VGA/SVGA clocks, but it will get within 1-2% of the correct frequency.
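To see why only an approximation is possible, here is a minimal sketch that brute-forces the M/N pair closest to a target clock using f_out = f_ref × M / N. The M and N ranges (`m_range`, `n_range`) and the helper name `best_mn` are hypothetical choices for illustration, not the GD-542x's actual register limits; the real error depends on which combinations the hardware permits.

```python
# Sketch: approximate a target pixel clock as f_ref * M / N.
# ASSUMPTION: the M and N ranges below are illustrative only,
# not the real Cirrus GD-542x register limits.

F_REF_MHZ = 14.31818  # ISA bus oscillator, approx. 14.318 MHz


def best_mn(target_mhz, m_range=range(1, 128), n_range=range(1, 64)):
    """Return (M, N, f_out, error_percent) closest to target_mhz."""
    best = None
    for m in m_range:
        for n in n_range:
            f_out = F_REF_MHZ * m / n
            err = abs(f_out - target_mhz) / target_mhz * 100.0
            # Keep the first pair found with the smallest error.
            if best is None or err < best[3]:
                best = (m, n, f_out, err)
    return best


# Standard VGA dot clocks (25.175, 28.322 MHz) plus a common SVGA one.
for target in (25.175, 28.322, 40.0):
    m, n, f, err = best_mn(target)
    print(f"target {target:7.3f} MHz -> M={m:3d} N={n:2d} "
          f"f_out={f:7.3f} MHz ({err:.3f}% off)")
```

Because 14.318 MHz and 25.175 MHz are not related by a small-integer ratio, no M/N pair lands exactly on the target; the search only minimizes the error.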

At one point I was reading through the XFree86 driver code for this card, and there were comments indicating that certain M/N combinations weren't stable or could cause interference, especially when the pixel and memory clocks were close to each other.
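One way to picture that kind of restriction is a clock search that simply skips risky combinations. In this sketch the memory clock (`MCLK_MHZ = 50.0`), the 2% minimum separation, the 1% error budget, the M/N ranges and the `candidates` helper are all hypothetical values chosen for illustration; none of them are taken from the XFree86 driver.

```python
# Sketch of a clock search that rejects pixel clocks too close to the
# memory clock. ASSUMPTIONS: MCLK_MHZ, the thresholds and the M/N ranges
# are hypothetical illustration values, not from the XFree86 driver.

F_REF_MHZ = 14.31818  # ISA bus oscillator, approx. 14.318 MHz
MCLK_MHZ = 50.0       # hypothetical memory clock


def candidates(target_mhz, max_err_pct=1.0, min_sep_pct=2.0,
               m_range=range(1, 128), n_range=range(1, 64)):
    """Yield (M, N, f_out) within max_err_pct of the target, skipping
    results closer than min_sep_pct to MCLK_MHZ (a hypothetical rule)."""
    for m in m_range:
        for n in n_range:
            f_out = F_REF_MHZ * m / n
            # Too far from the requested pixel clock.
            if abs(f_out - target_mhz) / target_mhz * 100.0 > max_err_pct:
                continue
            # Too close to the memory clock: treat as a risky combination.
            if abs(f_out - MCLK_MHZ) / MCLK_MHZ * 100.0 < min_sep_pct:
                continue
            yield m, n, f_out


for target in (25.175, 50.0):
    found = list(candidates(target))
    print(f"{target} MHz: {len(found)} usable M/N pairs")
```

With these made-up numbers, asking for a pixel clock equal to the memory clock leaves no usable pairs at all, while 25.175 MHz still has plenty.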

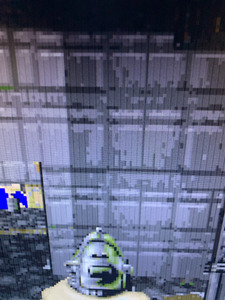

I think SVGA cards that use a PLL to generate their clocks will look bad on a modern digital display. My theory: the sampling clock in your display is *more accurate* than the card's PLL. So the digital display is expecting VGA pixels to arrive at exactly 25.175 MHz, but the card's PLL is producing pixels at, say, 25.181 MHz. This small difference doesn't matter one bit to a CRT, which just sweeps its beam across the analog signal without sampling it, whereas a digital display might end up trying to sample a 'pixel' at the same moment that the card's RAMDAC is switching between two pixel values.
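The theory can be put into rough numbers with a small sketch. It assumes, as the theory does, that the display samples with its own exact 25.175 MHz clock, and it additionally assumes (hypothetically) that the sampling point is re-aligned at every horizontal sync, so drift only accumulates across one line. The 25.181 MHz figure is the example from the post, not a measurement, and `sample_phase` / `line_drift` are illustrative helper names.

```python
# Sketch: how far a display's sampling point drifts relative to the
# card's pixels. ASSUMPTIONS: display samples at exactly DISPLAY_MHZ and
# re-aligns at each horizontal sync; CARD_MHZ is the post's example value.

DISPLAY_MHZ = 25.175  # clock the display expects (standard VGA 640x480)
CARD_MHZ = 25.181     # example PLL output from the post


def sample_phase(k, start_phase=0.5, display_mhz=DISPLAY_MHZ,
                 card_mhz=CARD_MHZ):
    """Where display sample k lands inside a card pixel: 0.0 is the start
    of the pixel (the RAMDAC transition), 0.5 is its middle. start_phase
    is where sample 0 lands at the beginning of the line."""
    t_us = (k + start_phase) / display_mhz  # time of sample k, microseconds
    pos = t_us * card_mhz                   # same instant in card pixel periods
    return pos % 1.0


def line_drift(n_pixels, display_mhz=DISPLAY_MHZ, card_mhz=CARD_MHZ):
    """Total drift of the sampling point, in card pixels, over n_pixels."""
    return n_pixels * (card_mhz / display_mhz - 1.0)


print(f"drift over 640 pixels: {line_drift(640):.3f} pixel")
for start in (0.5, 0.9):
    print(f"start {start}: sample 639 lands at phase "
          f"{sample_phase(639, start_phase=start):.3f}")
```

Here the sampling point walks about 640 × (25.181 / 25.175 - 1) ≈ 0.15 pixel across a 640-pixel line. A sample that starts mid-pixel stays clear of the transitions, but one that starts near a pixel boundary (start phase 0.9 above) wraps past it partway through the line, which is the situation the theory describes.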