Reply 40 of 53, by Scali

wrote:I have repeated the procedure, this time

- recording the 500Hz file instead of the piano piece

- recording at both of the speeds that the Tandy 1000 TX supports

- choosing the 40 column text mode for improved legibility on the composite output.

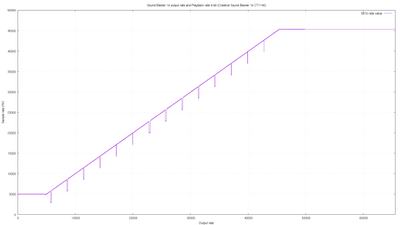

While the piano piece did not sound so bad, the sine wave exposes the choppiness of the output without mercy.

Thanks! So as James-F already mentioned, this confirms my expectations: a v1.x DSP does not work differently from v2.x or v3.x ones.

So I can use these routines without a problem for cards that only support single-cycle mode.

And the 'no busy wait' version seems to be the best one.

It assumes that when the card signals the interrupt at the end of the buffer, the DSP is not in 'busy' mode, so it writes the first command byte right away, instead of first reading back the status byte and checking the busy flag. This saves a few precious cycles on very slow machines (e.g. an 8088 at 4.77 MHz), reducing the glitch to a minimum.
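To make the difference concrete, here is a small Python model of the two ways to restart a single-cycle transfer. It is only a sketch, not real port I/O and not my actual handler: `FakeDSP` and the function names are made up for illustration, and the interrupt acknowledge and EOI are left out. The port offset (base+0xC for writes and for the busy flag in bit 7) and command 0x14 (8-bit single-cycle DMA output, followed by length minus one, low byte first) are the standard Sound Blaster DSP ones. The model assumes the DSP is never busy, which is exactly the assumption the 'no busy wait' version makes for the first byte.

```python
class FakeDSP:
    """Hypothetical stand-in for the DSP ports; it only logs accesses."""
    def __init__(self):
        self.log = []                      # ("in" or "out", offset, value)

    def inp(self, offset):
        self.log.append(("in", offset, None))
        return 0x00                        # bit 7 clear: modelled DSP is never busy

    def outp(self, offset, value):
        self.log.append(("out", offset, value))


WRITE_STATUS = 0x0C            # base+0xC: write command/data, read busy flag
CMD_SINGLE_CYCLE_8BIT = 0x14   # 8-bit single-cycle DMA output


def write_dsp(dsp, value):
    """Classic write: poll the busy flag (bit 7) first, then write."""
    while dsp.inp(WRITE_STATUS) & 0x80:
        pass
    dsp.outp(WRITE_STATUS, value)


def restart_with_busy_wait(dsp, length):
    """Every byte, including the command, waits for the busy flag."""
    write_dsp(dsp, CMD_SINGLE_CYCLE_8BIT)
    write_dsp(dsp, (length - 1) & 0xFF)
    write_dsp(dsp, (length - 1) >> 8)


def restart_no_busy_wait(dsp, length):
    """First command byte is written blind; only the length bytes poll."""
    dsp.outp(WRITE_STATUS, CMD_SINGLE_CYCLE_8BIT)
    write_dsp(dsp, (length - 1) & 0xFF)
    write_dsp(dsp, (length - 1) >> 8)
```

In this model the second variant saves exactly one port read before playback restarts. That is nothing on a fast machine, but on an 8088 every I/O instruction in that window adds to the audible gap.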

And it also shows that the 'DSP hack' is useless in practice: it glitches far more than a well-optimized interrupt handler.

wrote:Why would anyone think that this might happen? The DSP does not know the internal state of the DMA controller.

That's what I thought too. Under normal circumstances, the device doesn't know or care about the difference between single-transfer and auto-init modes on the DMA controller. But well, this is what they say on osdev: http://wiki.osdev.org/ISA_DMA

Some expansion cards do not support auto-init DMA such as Sound Blaster 1.x. These devices will crash if used with auto-init DMA. Sound Blaster 2.0 and later do support auto-init DMA.

I don't know where this 'wisdom' came from, but I didn't want to rule out the remote possibility that it is true until I had confirmation on actual hardware.

Well, your videos show that my program obviously does not crash your SB, and it only uses auto-init DMA.

I mean, there was a theoretical possibility that there was a bug in the DSP code, where it may have tried to fetch another byte after the DMA reached terminal count, which would then confuse the DSP firmware and crash.

But apparently there isn't. So osdev is wrong: they seem to confuse the 'auto-init' mode of the DMA controller with the 'auto-init' command of the DSP.

If you sent the auto-init command to a v1.x DSP, it probably still would not crash, but it would be an unknown command for it, so it would simply never start playing the sample, and as a result, it would never trigger an interrupt either. If your code relies on that interrupt occurring, that could deadlock your program in some way, so you could say it 'crashed'.
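The two things that share the name 'auto-init' live in different chips. On the DMA controller side it is just one bit in the 8237 mode byte (written to port 0x0B for the 8-bit channels), while on the DSP side it is a separate command (0x1C for 8-bit auto-init output, as opposed to 0x14 for single-cycle). A minimal sketch of the DMA side, with a helper name that is purely illustrative:

```python
def dma_mode_byte(channel, auto_init):
    """Build the 8237 mode byte for a single-mode, memory-to-device transfer.

    Illustrative helper, not taken from any real driver.
    """
    SINGLE_MODE = 0x40      # bits 7-6 = 01: single transfer mode
    AUTO_INIT = 0x10        # bit 4: reload address/count at terminal count
    READ_TRANSFER = 0x08    # bits 3-2 = 10: memory -> device (playback)

    value = SINGLE_MODE | READ_TRANSFER | (channel & 0x03)
    if auto_init:
        value |= AUTO_INIT
    return value


print(hex(dma_mode_byte(1, auto_init=False)))
print(hex(dma_mode_byte(1, auto_init=True)))
```

For channel 1 this gives the familiar 0x49 and 0x59. The DSP never sees this byte, which is why setting the auto-init bit here cannot by itself upset an SB 1.x.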

But we have busted that myth now, osdev is simply wrong.

wrote:As it happens, setting the DMA controller into auto-init mode even when the DSP is programmed for single-cycle mode is a good workaround for yet another hardware error of the Sound Blaster 16 DSP, which occasionally requests one more byte than it should, causing sound effect dropouts in Wing Commander II, Wolfenstein 3-D and Jill of the Jungle.

Yes, anything newer than the SB Pro 2.0 seems quite bugged with single-transfer mode, and I suppose using auto-init is the best solution there.

That is also the approach I want to take: I use auto-init where possible, and the single-cycle routine here is merely a fallback for older DSPs.
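In rough terms, the selection could look like the sketch below. It assumes the DSP version has already been read with the 'get version' command (0xE1, which returns major and minor), and that 8-bit auto-init output (0x1C) is available from DSP 2.00 onward; the function name is hypothetical and not from my code.

```python
DSP_SINGLE_CYCLE_8BIT = 0x14   # works on every DSP, needs a restart per buffer
DSP_AUTO_INIT_8BIT = 0x1C      # assumed available on DSP 2.00 and later


def pick_playback_command(major, minor):
    """Return the DSP output command to use for a given DSP version."""
    if (major, minor) >= (2, 0):
        return DSP_AUTO_INIT_8BIT
    return DSP_SINGLE_CYCLE_8BIT   # fallback for v1.x DSPs
```

The DMA controller can stay in auto-init mode in both cases; only the DSP command changes.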