analog_programmer wrote on 2024-03-14, 03:20:

Most of the chips of the early 3D consumer videocards are not much worse than Voodoo1 add-on 3D card on paper, but 3dfx turned out to have the best drivers and support in win9x and games.

The above is somewhere between hilarious and disingenuous.

No filters, broken filters, ugly filters.

Missing essential blending modes, atrocious blending implementations (and I don't just mean stippled alpha).

Missing Z-buffer.

Abysmal performance when using basic features like blending or Z-buffering.

Inability to use all features at the same time.

Both texture rate and fillrate were quoted for the best-case scenario: flat, non-shaded, non-blended, untextured, not Z-buffered, not subpixel-corrected. Meanwhile 3dfx was delivering 500-1000K triangles in 16-bit color, no matter the mode, at a steady 50 Mpixels/sec.

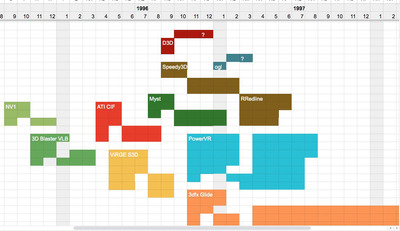

The only 3dfx contemporaries worth mentioning when it comes to actually deliverable performance were PowerVR and Rendition. The rest didn't count at all, leaving Voodoo1 unchallenged until Nvidia's second try.

Ozzuneoj wrote on 2024-03-14, 03:56:

These days lots of old games have source code available to help with direct optimization from that end, so it really comes down to whether the resources exist to either reverse engineer or work from source code to build better drivers for some cards. If a tiny driver team only had 6 months to a year to work on the 3D portion of a graphics driver AND communicate with game developers before they had to move on to the next thing it seems logical that over a longer period of time (a year or more), one or two hobbyists could understand that device better than the original developers ever had an opportunity to.

Things like Virge, Trident, Laguna3D, Mystique and ATI Rage are simply designed wrong, as if as a joke: https://www.youtube.com/watch?v=d696t3yALAY This is all "gaming at ~10 fps with artifacts" territory.

So the answer to your question is yes, but only for PowerVR and Rendition, and with limited success and huge difficulty.

The first one (PowerVR) delivers great performance using a neat trick, but only in some narrow circumstances, thanks to its non-standard tiled rendering engine. The trick is to be smart and massage the incoming triangle data so as to do as little actual rendering work as possible. It behaves best in deferred renderer scenarios, or when presented with a neatly prepared, front-to-back sorted triangle buffer for the whole scene all at once. Game engines that draw the world part after part, object by object, suffer a lot because PVR has no chance to optimize its data access patterns and is forced to draw everything.
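To put a rough number on "do as little actual rendering work as possible", here is a toy sketch in Python. It is not a model of real PowerVR hardware: the 1-D "screen", the `(start, end, depth)` span format and both function names are made up for illustration. It only compares how many pixels get shaded when every triangle is drawn as it arrives versus when the whole scene is known up front and only the front-most surface per pixel is shaded.

```python
# Toy overdraw model (illustration only, not real PowerVR behaviour).
# The "screen" is a 1-D row of pixels; each "triangle" is a span
# (start, end, depth), where a smaller depth means closer to the camera.

def shaded_immediate(spans):
    """Draw-as-you-go cost: every covered pixel of every span is shaded."""
    return sum(end - start for start, end, _depth in spans)

def shaded_deferred(spans, width):
    """Whole-scene cost: find the front-most span per pixel first,
    then shade each covered pixel exactly once."""
    nearest = [None] * width
    for start, end, depth in spans:
        for x in range(start, end):
            if nearest[x] is None or depth < nearest[x]:
                nearest[x] = depth
    return sum(1 for d in nearest if d is not None)

# Hypothetical scene: three overlapping spans on a 10-pixel row.
scene = [(0, 8, 3.0), (2, 10, 2.0), (4, 6, 1.0)]

print(shaded_immediate(scene))     # 18 pixels shaded
print(shaded_deferred(scene, 10))  # 10 pixels shaded
```

The saving only exists if the whole scene is available before shading starts, which is the point about engines that submit the world object by object.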

Rendition is an entirely different approach: it's a soft GPU. You need to write optimized firmware for each particular workload to extract maximum performance, as with VQuake.

AFAIK the secret of 3dfx performance was its brute-force strength. 3dfx doesn't rely on any single data optimization trick; it just steamrolls all triangles/pixels through its pipeline as-is, and only at the very end decides whether the already fogged, shaded, textured, blended, mip-mapped, pixel-corrected pixel of a triangle passes the Z-buffer or not. There is a 5-20% performance difference between turning every single option (texturing, fog, shading, filtering, subpixel correction) on or off. There is zero difference when enabling/disabling alpha blending and the Z-buffer; those are free. You can feed 3dfx triangles back to front all day long and performance won't budge. You don't have to worry about _any_ optimizations beyond spitting triangle coordinates to the Glide library and keeping to ~50 Mpix fillrate and ~10K triangles per frame.
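For a feel of what "keeping to ~50 Mpix fillrate" means in practice, here is a back-of-the-envelope sketch in Python. The 50 Mpix/s figure is from the post; the 640x480 resolution, the overdraw factors and the function names are my own assumptions for illustration, and the second function is just a toy way of saying that a pipeline which tests Z at the very end pays for every pixel regardless of submission order.

```python
FILLRATE = 50_000_000  # pixels per second, the ~50 Mpix figure quoted above

def max_fps(width, height, overdraw, fillrate=FILLRATE):
    """Upper bound on frames/sec if each frame pushes
    width * height * overdraw pixels through the pipeline."""
    return fillrate / (width * height * overdraw)

def pixels_through_pipeline(spans):
    """Toy late-Z model: every pixel of every (start, end, depth) span
    is processed in full, so the total does not depend on draw order."""
    return sum(end - start for start, end, _depth in spans)

# Assumed resolution 640x480; overdraw of 1 and 3 are arbitrary examples.
print(round(max_fps(640, 480, 1), 1))  # 162.8
print(round(max_fps(640, 480, 3), 1))  # 54.3

# Hypothetical spans, submitted in one order and then reversed.
scene = [(0, 8, 3.0), (2, 10, 2.0), (4, 6, 1.0)]
print(pixels_through_pipeline(scene) ==
      pixels_through_pipeline(list(reversed(scene))))  # True
```

So under these assumptions the budget is roughly "total pixels drawn per frame", and sorting the triangles buys nothing, which matches the "performance won't budge" observation.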