Hanamichi wrote on 2020-10-24, 01:27:

darry wrote on 2020-10-24, 00:08:

Hanamichi wrote on 2020-10-23, 15:16:

Ah that's right IXOS is probably aimed at the UK and Europe. I've seen some bad and some good KVM monitor cables that might be cheap.

I am hoping to utilise an AJA IO HD as a capture device with a hackintosh but there is little mention of supported 4:3 resolution upper and lower limits in the manual.

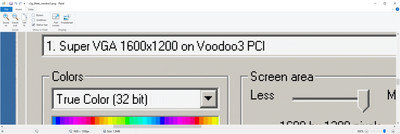

If you already have an AJA IO HD, why not, but I hope you realize that it does not natively support RGB video capture or even non-sub-sampled video. The manual says RGB output is possible, but YPbPr is the unit's native format and there are only references to 4:2:2, never 4:4:4, so the RGB must be generated from 4:2:2 YPbPr. It might not support 70Hz input either (nothing about this in the manual). Finally, the unit runs over Firewire 800, which is limited to 800Mb/second, which is 100MB/second. Uncompressed YUV 4:2:2 video at 640x400@70Hz is about 35MB/second and uncompressed YUV 4:2:2 video at 1024x768@60Hz is about 90MB/second, which means anything above 1024x768 will need to be compressed using a lossy codec ("visually lossless", if you believe the marketing).
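As a rough check of those figures, here is a minimal Python sketch of the arithmetic. It assumes 8-bit 4:2:2 (an average of 16 bits per pixel), counts 1 MB as 10^6 bytes, and ignores blanking and Firewire protocol overhead; the function name and the list of modes are only illustrative. With those assumptions, 640x400@70Hz comes out near 36MB/second and 1024x768@60Hz near 94MB/second.

```python
def uncompressed_rate_mb_s(width, height, refresh_hz, bits_per_pixel=16):
    """Raw video data rate in MB/s (1 MB = 10**6 bytes).

    bits_per_pixel=16 assumes 8-bit 4:2:2: 8 bits of luma per pixel
    plus two 8-bit chroma samples shared by every 2 pixels.
    """
    return width * height * refresh_hz * bits_per_pixel / 8 / 1e6


# Nominal Firewire 800 rate: 800 Mb/s = 100 MB/s, protocol overhead ignored.
FIREWIRE_800_MB_S = 800 / 8

# Illustrative modes only; whether the capture unit accepts them is a separate question.
modes = [(640, 400, 70), (800, 600, 60), (1024, 768, 60), (1280, 1024, 60)]

for w, h, hz in modes:
    rate = uncompressed_rate_mb_s(w, h, hz)
    verdict = "fits" if rate <= FIREWIRE_800_MB_S else "needs compression"
    print(f"{w}x{h}@{hz}Hz: {rate:.1f} MB/s ({verdict})")
```

Passing `bits_per_pixel=20` gives a ballpark for 10-bit 4:2:2, although real packed 10-bit formats may use somewhat more than that.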

Yeah, it's just doing nothing in the garage. I bought it cheap for its unique ability to de-embed 8ch HD Audio digitally to multiple SPDIF outputs hooked up to an older AV receiver.

It does seem to convert to YPbPr color space, so not ideal for screenshots, I agree. I'm only looking to capture at 800x600 max, so I thought it was worth trying out, and it would be nice to free up a PCIe slot. I was thinking 10-bit 4:2:2 would be good enough for YouTube, as once uploaded it would end up as 10/8-bit 4:2:0.

I guess capturing RGB 24bit or higher and integer scaling 4x then uploading is the best way?

Hopefully I have fixed my Epiphan DVI2PCIe board, so I can assess the IO HD's performance against the best.

DISCLAIMER: I am not an expert in video or image processing, so my understanding may well be incorrect. Please correct me if I am wrong.

This applies to computer-generated graphics with hard edges, which is essentially the worst-case scenario for sub-sampling, AFAIK:

Ideally, to negate the effect of the sub-sampling, you would need to integer scale by a factor of 2 or more BEFORE video capture, otherwise you will just end up making the sub-sampling artifacts more noticeable. Since 320x200 is already line-doubled to 640x400, that should look OK. Everything else will be affected by sub-sampling unless line-doubled at the source or by an intermediate device, like an OSSC.

In the end, if it ends up as 4:2:0 on YouTube or elsewhere, capturing it as 4:2:2 or even 4:2:0 should not be a significant issue as long as you do not upscale (integer or otherwise) the video after it has already been sub-sampled. Upscaling sub-sampled video will just increase the visibility of the sub-sampling's image reconstruction artifacts. Upscaling video BEFORE it is sub-sampled will minimize the visual effects of the sub-sampling.

For example, if you take an RGB image and integer scale (line double) it by 2x on each axis (preserving aspect ratio), each original pixel will become 4 identical pixels. If you then 4:2:0 sub-sample this image, chroma will only be sampled once every 2 pixels on each axis, but since every other pixel is identical to the one preceding it (due to the integer scaling before the sub-sampling), there is no actual information lost (or nearly so, because the RGB to YPbPr conversion that occurs prior to sub-sampling is lossy, and alignment between the integer scaling and the sub-sampling can play a role as well).
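To illustrate the idea, here is a minimal pure-Python sketch that models a single chroma plane only. It assumes the 2x2 sub-sampling blocks line up exactly with the 2x2 blocks created by the integer scaling, that sub-sampling averages each block, and that reconstruction is plain sample replication (real decoders usually interpolate chroma, and real pipelines also include the lossy RGB to YPbPr step). The function names and the test pattern are made up for the example.

```python
def upscale_2x(plane):
    """Nearest-neighbour 2x integer scale: each sample becomes a 2x2 block."""
    out = []
    for row in plane:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def subsample_420(plane):
    """Keep one chroma sample per 2x2 block (the block average).

    Assumes even width and height.
    """
    height, width = len(plane), len(plane[0])
    return [
        [
            (plane[y][x] + plane[y][x + 1] + plane[y + 1][x] + plane[y + 1][x + 1]) / 4
            for x in range(0, width, 2)
        ]
        for y in range(0, height, 2)
    ]


def reconstruct(plane):
    """Rebuild a full-resolution plane by replicating each chroma sample."""
    return upscale_2x(plane)


# Hard-edged checkerboard chroma: the worst case for sub-sampling.
chroma = [
    [16, 240, 16, 240],
    [240, 16, 240, 16],
]

# Sub-sample the original directly: neighbouring values get averaged together.
direct_is_lossless = reconstruct(subsample_420(chroma)) == chroma

# Integer scale first, then sub-sample: every 2x2 block is already uniform.
scaled = upscale_2x(chroma)
prescaled_is_lossless = reconstruct(subsample_420(scaled)) == scaled

print("sub-sampled directly, lossless:", direct_is_lossless)
print("scaled 2x first, lossless:", prescaled_is_lossless)
```

In this toy model the directly sub-sampled checkerboard collapses to a flat mid value, while the pre-scaled version survives the round trip unchanged. Shifting the sub-sampling grid by one pixel relative to the scaled blocks would break that, which is the alignment caveat mentioned above.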

I am curious about the audio de-embedding. Does it not care about SCMS, and is it low-latency enough to allow real-time listening when synchronized to a video track?