jakethompson1 wrote on 2021-07-31, 01:21:

Code would have to make 16-bit accesses to video RAM to actually take advantage of a 16-bit video card, right? Is it possible this was rare in the early days of VGA? Maybe something to do with planar memory?

The more advanced operations of the EGA/VGA graphics controller, like changing the color of only some of the 8 pixels in a 4-plane byte, only work with 8-bit accesses. They make use of an internal buffer (called the latch) in the graphics controller, which holds just 8 bits per plane, i.e. 32 bits total. Only operations where you rewrite consecutive whole bytes and don't rely on the internal latch could benefit from 16-bit accesses.
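To make the latch point a bit more concrete, here is a rough Python model of a single byte address in 4-plane memory. It is only a sketch under simplifying assumptions: it covers write mode 0 with set/reset enabled on all four planes plus the bit mask, and nothing else. The names (`PlanarCell`, `recolor_demo`, `pixel_colors`) are made up for illustration and don't correspond to any real API.

```python
class PlanarCell:
    """Toy model of ONE byte address (8 pixels) in 4-plane EGA/VGA memory."""

    def __init__(self):
        self.planes = [0, 0, 0, 0]  # one byte per plane at this address
        self.latch = [0, 0, 0, 0]   # 8 bits per plane, 32 bits total
        self.bit_mask = 0xFF        # 1 = pixel gets new color, 0 = keep latched
        self.set_reset = 0x0        # 4-bit color applied to masked-in pixels

    def cpu_read(self):
        # A CPU read loads all four plane bytes into the latch as a side effect.
        self.latch = list(self.planes)

    def cpu_write(self, _data=0):
        # Assumption: set/reset is enabled for all planes, so the CPU data
        # byte is ignored and each plane is filled from its set/reset bit.
        for p in range(4):
            fill = 0xFF if (self.set_reset >> p) & 1 else 0x00
            keep = self.latch[p] & ~self.bit_mask & 0xFF
            self.planes[p] = (fill & self.bit_mask) | keep


def pixel_colors(planes):
    """Decode the 8 pixel colors (leftmost pixel = bit 7) from 4 plane bytes."""
    return [
        sum(((planes[p] >> (7 - x)) & 1) << p for p in range(4))
        for x in range(8)
    ]


def recolor_demo():
    cell = PlanarCell()
    cell.planes = [0xFF, 0x00, 0x00, 0x00]  # all 8 pixels start as color 1
    cell.bit_mask = 0b00111100              # touch only the middle 4 pixels
    cell.set_reset = 10                     # new color for those pixels
    cell.cpu_read()                         # fill the latch first
    cell.cpu_write()                        # masked write, rest comes from latch
    return pixel_colors(cell.planes)


print(recolor_demo())
```

The untouched pixels come back out of the latch, and the latch only holds one byte address worth of data (8 bits x 4 planes). In this model, a 16-bit access would span two byte addresses, so a single read/write pair could not do the same trick for both of them.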

There are some modes where consecutive bus bytes are mapped to the same VGA memory address, but different planes. The most prominent example is text mode, where you have the character code in plane 0 and the attribute byte in plane 1 (at the same address). A 16-bit VGA card could take a 16-bit write and split it over the two planes. The other prominent example is the 256-color mode, in which four(!) consecutive bytes on the ISA bus are mapped to the same VGA memory address, essentially using only 1/4 of the VGA memory. A 16-bit VGA card can take a 16-bit write and distribute it over planes 0+1 or 2+3, depending on the address.
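As a sketch of the mapping described above, the following Python models how a bus address picks a plane and a VGA memory address in the two modes, and how an aligned 16-bit write would be split. This is a simplified model of the description in this post, not of any particular chip: real hardware has additional address-bit details that are left out, and all function names are made up for illustration.

```python
def odd_even_target(cpu_addr):
    """Text mode: even bus bytes go to plane 0, odd ones to plane 1."""
    return cpu_addr & 1, cpu_addr & ~1   # (plane, VGA memory address)


def chain4_target(cpu_addr):
    """256-color mode: four consecutive bus bytes share one VGA address."""
    return cpu_addr & 3, cpu_addr & ~3   # (plane, VGA memory address)


def split_word_write(cpu_addr, word, target):
    """Split an aligned little-endian 16-bit write into two plane writes.

    Returns a list of (plane, vga_addr, byte) tuples, low byte first.
    """
    lo, hi = word & 0xFF, (word >> 8) & 0xFF
    return [(*target(cpu_addr), lo), (*target(cpu_addr + 1), hi)]


# Text mode: character 'A' (0x41) with attribute 0x07 written as one word.
print(split_word_write(0x0000, 0x0741, odd_even_target))

# 256-color mode: a word at bus address 6 lands in planes 2 and 3.
print(split_word_write(0x0006, 0xBBAA, chain4_target))
```

In both cases the two bytes of the word end up at the same VGA memory address in different planes, which is why a 16-bit card can service them in a single memory cycle.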

Actually, what I wrote in the previous paragraph is not just a "could": it seems to actually be implemented in some VGA cards. I recently benchmarked some Trident cards (simple models without FIFO) in different modes, and found that data rates improve with 16-bit transfers only in text modes and non-planar modes (like the 256-color mode), whereas 16-bit is no faster than 8-bit in planar graphics modes (16-color mode).

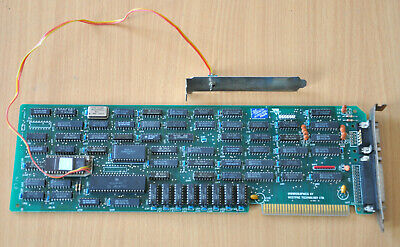

The most compelling reason the original EGA design only has 8 data bits is very likely that IBM designed the EGA card so that all the custom chips fit in a 40-pin DIP case. Chips in this form factor could be manufactured by basically any decent chip factory, while chips in bigger cases required special tooling. That's why they sliced the graphics processor (which processes the 8 bits from/to the ISA bus and the 32 bits from/to the video RAM during drawing operations) into two parts that process only 16 bits each. This is why the EGA card has the strange "graphics position #0" and "graphics position #1" I/O ports that are only described as "must be written 0" and "must be written 1": these ports each access only one of the two graphics controller halves, and tell that controller which bits it is responsible for. All other accesses are handled in parallel by both graphics controller chips. Adding a 16-bit data channel to the CPU would have exceeded the 40-pin limit on the graphics controller again. Considering that the EGA card was already a full-sized board, compensating for this by splitting the graphics controller into four parts instead of two was not an option, and even if it had been, it would have raised the cost a lot.

So we have a historical reason for the EGA being 8-bit (cost, card space), and that caused the whole planar mode programming model to be centered on 8-bit accesses. Later cards (Super EGA / VGA / early Super VGA) had to implement the same 8-bit-centric programming model, so implementing them as 8-bit cards makes a lot of sense.